Christy Hovanetz, Ph.D., is a Senior Policy Fellow for ExcelinEd focusing on school accountability and math policies.

Last week, ExcelinEd responded to a request for information from the United States Senate Committee on Health, Education, Labor and Pensions (HELP) on federal and state K-12 accountability systems. Below is the text of ExcelinEd’s Senior Policy Fellow Christy Hovanetz’s response.


Request for Information – February 13, 2026

The HELP Committee is committed to advancing bipartisan solutions to the achievement crisis facing K-12 education in the U.S. To that end, the Committee invites responses to the questions below, legislative solutions, and any other insights on how the federal government can support states, districts, and schools as they advance pro-student and pro-family policies. Please submit feedback and comments to K12Growth@help.senate.gov by Friday, February 13, 2026.

ExcelinEd is a national 501(c)(3) organization that supports state leaders and policymakers to advance student-centered K-12 education policies with a focus on educational quality, innovation and opportunity. ExcelinEd convenes thought leaders, conducts state-specific research and provides deep policy expertise and implementation support to strengthen educational outcomes across the United States.

For more information, contact Senior Policy Fellow Christy Hovanetz, Ph.D., at Christy@ExcelinEd.org or 850.212.0243.

The opening statement of the Request for Information correctly observes that the American education system too often falls short for the students who need it most. In practice, many states’ growth models perpetuate this issue by institutionalizing the “soft bigotry of low expectations.”

Few states use growth models that explicitly measure whether a student mastered more grade level content this year than last year.

Instead, states rely on growth models with low expectations and peer-to-peer comparisons, meaning that even if a student meets expectations each year, that growth may still not result in reaching grade level proficiency. Yet a school can earn credit and be deemed successful for students meeting those low expectations.

This is not fair to students.

The goal of Title I is to ensure all students receive a fair, equitable, and high-quality education. Accomplishing this requires more than additional funding; it also requires holding all students to the same rigorous expectations. When student growth is measured, the principles of “fair” and “equitable” are imperative to delivering a “high-quality” education to all students.

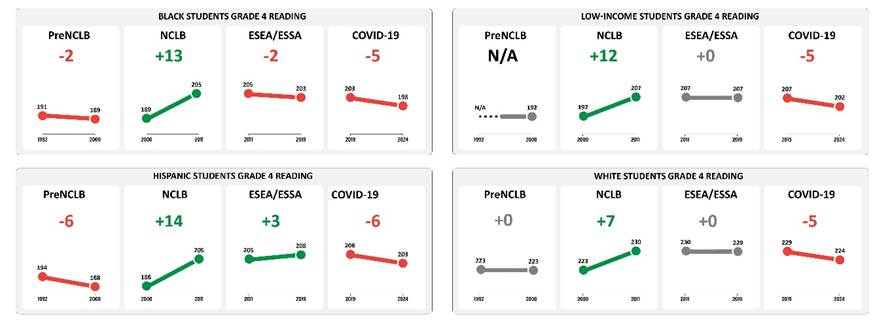

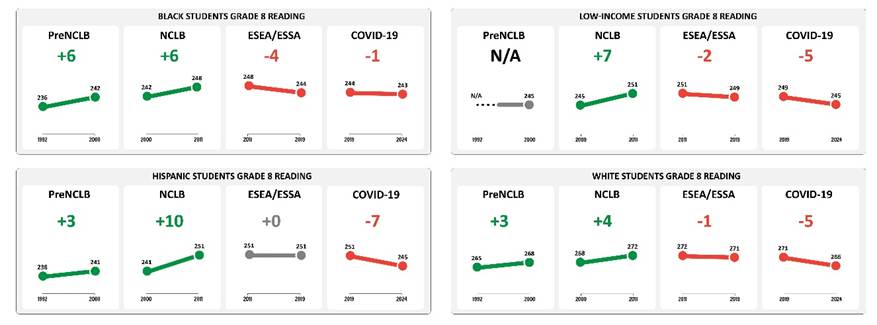

It is also worth remembering that the most significant improvements in student outcomes took place when the focus was on student proficiency for the purpose of school accountability. Under the federal law No Child Left Behind (NCLB), which was in place in the early 2000s, schools were expected to have 100% of students reach proficiency in reading and math, meaning all students were held to the same grade level expectations—student growth was not a factor. While states did not reach 100% proficiency, they made their greatest gains, narrowed achievement gaps, and came closer to that 100% goal than at any other time.

Since NCLB was replaced, expectations for 100% proficiency are no longer the focal point. As a result, national test scores stagnated and began a gradual decline. COVID exacerbated that trend.

[Charts: Grade 4 Math, Grade 8 Math, Grade 4 Reading, Grade 8 Reading]

Growth was first introduced into federal accountability as a waiver in 2011, and states were allowed to use models that measure “growth to proficiency” within a specified amount of time or by a specific grade. The “growth to proficiency” model was established as a relief from proficiency-only measures, but because of its rigor, it did not result in many additional schools meeting Adequate Yearly Progress (AYP) requirements.

Growth models later became one of the primary indicators added when the Elementary and Secondary Education Act (ESEA) was reauthorized as the Every Student Succeeds Act (ESSA). However, no guardrails were written into law to ensure growth models measured meaningful progress toward grade-level proficiency.

While a few states implemented rigorous, criterion-based expectations for growth, most others did not, mainly because federal regulations cited the normative Student Growth Percentiles (SGP) as an ‘approvable’ option. States eager for relief from proficiency adopted SGP with limited exploration. Fortunately, Florida, Mississippi and a few other states had already implemented their own criterion-based methods for measuring growth, ensuring that any growth for which a school earned credit was meaningful for moving students toward grade-level proficiency. Student improvements on NAEP in these states support the merits of, and the need for, criterion-based growth models.

A fair measure of growth requires holding all students to the same high expectations and ensuring that meeting those expectations moves students closer to reaching grade-level proficiency.

It is not fair to students to base expectations on their peers’ performance, set different expectations based on characteristics, or set low expectations that do not reflect mastery of state academic content standards.

Any attempt to mitigate, excuse or adjust growth expectations based on student characteristics or relative performance only serves to perpetuate a system of low expectations.

Students who are the furthest behind may need the most support to catch up, but we should not expect less of these students. Instead, as Title I directs, we should provide more support to uphold the promise of a fair, equitable, high-quality education for all students.

Fairness relies on administering the same statewide assessments in reading and math. Valid, comparable, statewide assessment data are the foundation of a strong growth calculation. There is no substitute for uniform assessments that allow true apples-to-apples comparisons across students, schools and districts.

States have learned a great deal from developing different measures of student growth.

There are two widely used methods for calculating student growth: criterion-based growth and norm-referenced growth.

Criterion-based growth measures each student’s progress toward specific learning goals. The focus is on whether a student is moving closer to meeting or exceeding state expectations. For example, a student who is behind must make a pre-determined amount of progress each year to reach proficiency, while a proficient student would aim to reach an advanced level. The key question is whether the student is making the progress needed to master the standards.

Norm-referenced growth, such as Student Growth Percentiles (SGP) and Value-Added Models (VAM), measures students against one another. It asks how a student’s progress compares to that of other students across the state. Because this approach is a comparison, there will be students determined to be “making growth” and others as “not making growth,” even if all students improve. In other words, even if every student in the state performs better than last year, some will still be deemed as “not making growth” simply because others improved more.
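The contrast can be sketched in a few lines of Python. This is a deliberately simplified illustration, not how any state computes SGP or VAM: the score gains, the median cutoff used for the norm-referenced rule, and the 5-point target used for the criterion rule are all hypothetical values chosen for the example.

```python
from statistics import median


def norm_referenced_flags(gains):
    """Flag growth only for students at or above the group's median gain.

    The median cutoff is a hypothetical stand-in for a peer comparison.
    """
    cutoff = median(gains)
    return [gain >= cutoff for gain in gains]


def criterion_flags(gains, required_gain):
    """Flag growth for every student who meets a preset, known target."""
    return [gain >= required_gain for gain in gains]


# Hypothetical scale-score gains: every student improved over last year.
gains = [5, 8, 12, 15, 20, 25]

norm_flags = norm_referenced_flags(gains)
crit_flags = criterion_flags(gains, required_gain=5)  # hypothetical target

print(sum(norm_flags), "of", len(gains), "made growth (norm-referenced)")
print(sum(crit_flags), "of", len(gains), "made growth (criterion-based)")
```

Under these assumptions, every student improved, yet the peer comparison still labels half of them as not making growth, while the preset target credits all of them.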

Meeting criterion-based growth means students are moving toward mastery of state academic content standards. Criterion-based growth models are the fairest models to use for school accountability, because all students can demonstrate growth and expectations are known and not dependent on the performance of others.

When measuring student growth, the central question should be whether a student mastered more grade level content this year than last year. Only criterion-based growth models can answer that question.

| Criterion-Based Growth Models: Growth Toward Proficiency, Value Table | Norm-Referenced Growth Models: Student Growth Percentiles (SGP), Value-Added Model (VAM) |
| --- | --- |
| Educators can compute and replicate growth calculations | Statisticians compute growth generally using a black-box proprietary formula and statewide student level data |
| Individual student learning expectations are set at the beginning of the year | Individual student learning expectations are determined after the student’s state assessment results are back |
| All students can demonstrate growth | Only some students can demonstrate growth – there will always be winners and losers no matter how much state results improve |
| Criteria for determining individual student growth are set, and expectations are known by students, parents, educators, policymakers and the public before testing | Criteria for determining individual student growth are determined after the test results are back, so students, parents, educators and policymakers do not know how much a student must improve to demonstrate growth until after the school year is over |
| The improvement needed for demonstrating growth is the same for each student | The improvement needed for demonstrating growth is different for each student |
| Consistent expectations from year to year allow for longitudinal comparisons | Expectations for demonstrating growth change from year to year, making longitudinal comparisons impossible |
| Expectations, if met each year, will result in proficient or advanced student achievement | Growth expectations, even if they are met each year, may not result in proficient or advanced student achievement |

Short summaries of three commonly used growth measures are provided in the final section.

The most important type of federal support is upholding the Every Student Succeeds Act (ESSA) requirement for states to annually administer the same standards-aligned assessments to all students. Without annual, comparable data for every student, growth cannot be measured.

State testing requirements provide the comparable data needed to hold schools accountable for supporting both growth and achievement of all students. This consistency produces data that can be validly compared across classrooms, schools and districts, which allows educators, administrators, and policymakers to make informed decisions about how to best target student support. Without this data, identifying needs and areas for resource allocation becomes troublesome.

Additionally, federal support requiring states to implement criterion-based growth models, set rigorous expectations, and ensure schools only earn credit when students master more state academic content standards during the current year versus the prior year, would ensure schools are accountable for all students reaching grade level expectations.

There are no federal policies standing in the way of states creating higher-quality growth measures. The only impediment is the political will to hold schools to higher expectations, ensuring all students reach grade-level proficiency.

States have learned through polling, focus groups and anecdotes that parents are generally unconcerned about “school-average growth” and focus mainly on whether their own child improved.

For parents to be interested in school-level data, the measure must be easily understood. For example, informing parents that 65% of students made growth toward grade-level proficiency is a metric that resonates. Informing parents that the school-average growth is a value-added score of 0.58 is much harder to understand.

What is informative to parents is how many students are scoring at grade level and how many are getting closer to grade-level proficiency; parents want to understand how many students a school taught to become better readers and mathematicians.

Cross-state learning could begin with parent polls or focus groups to gather state-specific examples of how states communicate school performance with parents. Identifying which states effectively communicate results to parents, as well as knowing what parents value most, could lead to best-practice studies for funding and the creation of road maps for other states.

States have a decades-long history of reporting results about school performance through federally required school report cards. However, many parents lack interest or do not fully understand the information or its implications. Revising reporting systems to provide relevant, more easily consumed information that is tied to their child’s educational experience and delivered in a format beyond a static website may increase parent engagement at the school level.

While parent and family engagement is crucial, it is equally important to provide clear, easily accessible information to educators, policymakers, business leaders, taxpayers and the public. Education outcomes affect individuals, but they also greatly impact the productivity and prosperity of the nation.

States should not be required to test students in grades K-2.

In early elementary school, academic and skill development varies widely based on kindergarten readiness, age, gender, and other factors. It is appropriate—and sufficient—to begin measuring growth after third grade when the expectation is that the playing field has been leveled.

Local control should prevail when measuring growth in the early grades.

While states begin to hold schools and districts accountable for performance starting in third grade, educators have already identified students requiring more instructional support using locally selected instructional assessments. These curriculum-embedded assessments, as well as Curriculum Associates i-Ready, Renaissance Star, DIBELS 8, and others, are instructional tools that help educators guide daily lesson plans and classroom activities by identifying the specific skills students have mastered on the way to becoming successful readers and mathematicians.

Allowing schools and districts three years to work with students before holding them accountable provides time to build important skills and level the playing field.

State-level growth models are not needed for K-2, and it should be the responsibility of the schools and districts to design their instructional strategy for the early grades.

The National Assessment of Educational Progress (NAEP) is the gold standard for measuring student achievement nationally and for state comparability. It tests a representative sample of students in selected grades every other year to produce longitudinal trends that monitor student performance.

Unless NAEP is expanded to test every student, every year, in every grade, growth should not be considered for inclusion in NAEP. Because states are already required to annually administer the same state assessment to every student in grades 3-8 and high school, it is unnecessary for NAEP to duplicate this effort.

A federal focus on growth shifts attention away from our ultimate goal, which is proficiency. There should not be a national focus on student growth. The focus should be on grade-level mastery that prepares students for success after high school.

NAEP should remain a high-level checkpoint for academic performance trends, alerting federal and state policymakers when changes in policy and instructional practices are needed.

The annual state summative assessment provides objective, comparable data that serves as the high-level, external check on local practices for improving student outcomes. The rigorous expectations and administration of the state assessment are imperative to upholding the promise of equity.

State leaders use annual state summative assessment data to measure growth for purposes of school accountability. This growth measure, coupled with data from achievement indicators, serves to identify areas of success and areas where further intervention and support are needed. Taken together, these high-level measures guide decisions on funding, resource allocation and state-level policy initiatives, such as the adoption of science of reading principles and certification requirements.

Growth can also be measured using formative assessments to help teachers guide instructional practice. Increasingly, state leaders are supporting educators by procuring formative assessments that measure growth throughout the year on specific skills, providing data to inform daily instruction. These formative assessments are important instructional tools used at educators’ discretion to improve classroom practice and identify areas where re-teaching is needed to ensure students develop the foundational skills needed to be successful at meeting grade-level standards.

Growth measures from summative assessments are used for accountability, whereas growth measured by formative assessments guides instructional practice. Summative assessments are assessments of learning and formative assessments are for learning.

Assessments of learning are the end-of-year tests that determine whether students have mastered grade-level standards. These assessments were designed to provide results for accountability and long-term planning.

Assessments for learning are shorter, generally locally selected assessments administered throughout the year. They focus on specific skills and provide teachers with a tool to determine where students are excelling or struggling. Teachers use this data to shape daily instruction and content. For example, a reading assessment for learning might test students on whether they understand that an “M” makes an “mmm” sound.

By contrast, an assessment of learning might ask a student to determine the main idea of a passage after reading.

Only assessments of learning should be used to hold schools accountable, as they were designed for that specific purpose. Assessments for learning should remain checkpoints used by educators for daily instructional practice.

States may provide guidance on selecting these formative assessments to ensure they are aligned to state standards and provide valid and reliable data that supports student learning. However, the administration, use and data from these formative assessments should remain the province of local discretion.

The Every Student Succeeds Act already allows states to use measures of growth in school accountability systems.

Nearly every state has implemented a growth indicator in their school accountability system, making additional federal incentives to focus on growth unnecessary.

Proficiency should be the primary focus of federal policy. It is what NAEP measures and, ultimately, the goal for all students. If a federal incentive is provided, it should encourage—or require—states to use criterion-based growth models in school accountability systems to ensure students are making progress toward proficiency.

We know all students can learn, and a strong accountability system must capture achievement and criterion-based growth measures. The goal is for all students to perform at grade-level proficiency, though the reality is that many are not. Measuring achievement illustrates whether students have mastered grade-level standards, which is the ultimate goal, but proficiency alone does not provide a complete picture.

Using a criterion-based growth measure levels the playing field so that schools are not advantaged or disadvantaged based on the students who attend. The growth component requires schools to demonstrate that all students, both high-achieving and low-achieving, have made at least a year’s worth of progress in a year’s time.

Growth ensures schools earn credit for making progress with students who entered their school below grade level but have not yet achieved grade-level performance, while also applying pressure on schools that have high-performing students to sustain those students’ efforts.

Achievement and criterion-based growth should be equally balanced in accountability systems for elementary and middle schools. Weighting growth more heavily than proficiency leads schools to focus on improvement rather than the ultimate goal: all students reaching grade-level proficiency.

States that place excessive emphasis on growth may find themselves rating a school an A or B despite having very few students achieving on grade level, which undermines the credibility of the accountability system.

High school accountability systems should operate slightly differently, with greater emphasis on outcomes that prepare students for post-high school opportunities. A greater focus on achievement in high school ensures students meet grade-level expectations that prepare them for graduation and beyond.

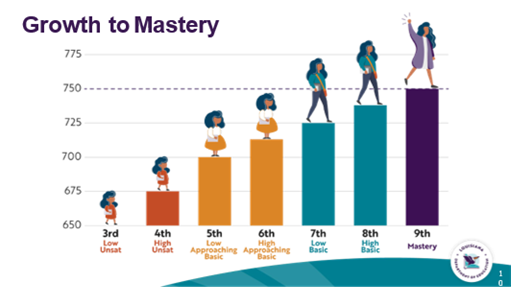

When measuring growth, Growth Toward Proficient models measure whether a student mastered more grade level content in the current year versus the prior year.

These models measure Growth Toward Proficient and Advanced achievement by calculating each student’s year-to-year growth and comparing it to an established expectation, such as a preset number of points or advancement to a higher achievement level.

The model measures the change in an individual student’s year-to-year test scores, for example the change in a student’s score from the third grade reading test to the fourth grade reading test.

That change in the test score is then compared to a preset number of points to determine if the student made Growth Toward Proficient or made advanced achievement.

States like Florida, Mississippi and Louisiana measure growth using this calculation.

| Florida | Mississippi | Louisiana |
| --- | --- | --- |
| Percent of students who, from one year to the next: | Percent of students who, from one year to the next: | Percent of students who, from one year to the next: |
| Increase to higher achievement level | Increase within the lowest three performance levels (ex. increase from bottom half of Basic to top half of Basic) | Grow from low half to high half of Unsatisfactory, Basic, Approaching Basic |
| Increase within achievement levels 1 and 2 | Increase to higher performance level | Improve to the next higher achievement level |
| Remain at level 3 or 4 AND had a higher scale score | Remained at Proficient or at Advanced | Grow at least one scale point within Mastery |
| Remained at level 5 | | Remained at Advanced |

If students meet the growth expectations described for Growth Toward Proficiency every year, they will reach grade-level proficiency.
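The comparison described above can be sketched as a small Python check. This is a generic illustration rather than any state's actual rule: the function name, the 10-point target, the sample scores and the assumption that scores from consecutive grades sit on a comparable scale are all hypothetical.

```python
def made_growth(prior_score, current_score, prior_level, current_level,
                required_points):
    """Return True if the student met a preset growth expectation.

    Growth is credited when the student advanced to a higher achievement
    level or gained at least `required_points` scale-score points.
    Assumes (hypothetically) that scores are comparable across grades.
    """
    moved_up_level = current_level > prior_level
    met_point_target = (current_score - prior_score) >= required_points
    return moved_up_level or met_point_target


# Hypothetical students: (prior score, current score, prior level, current level)
students = [
    (290, 305, 2, 2),  # gained 15 points within the same level
    (290, 294, 2, 2),  # gained only 4 points within the same level
    (290, 296, 2, 3),  # gained 6 points but moved up a level
]

results = [made_growth(*s, required_points=10) for s in students]
percent_making_growth = 100 * sum(results) / len(results)
print(results, round(percent_making_growth, 1))
```

Because the target is fixed before testing, each student's result depends only on that student's own scores, and the school-level figure is simply the percentage of students who met the expectation.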

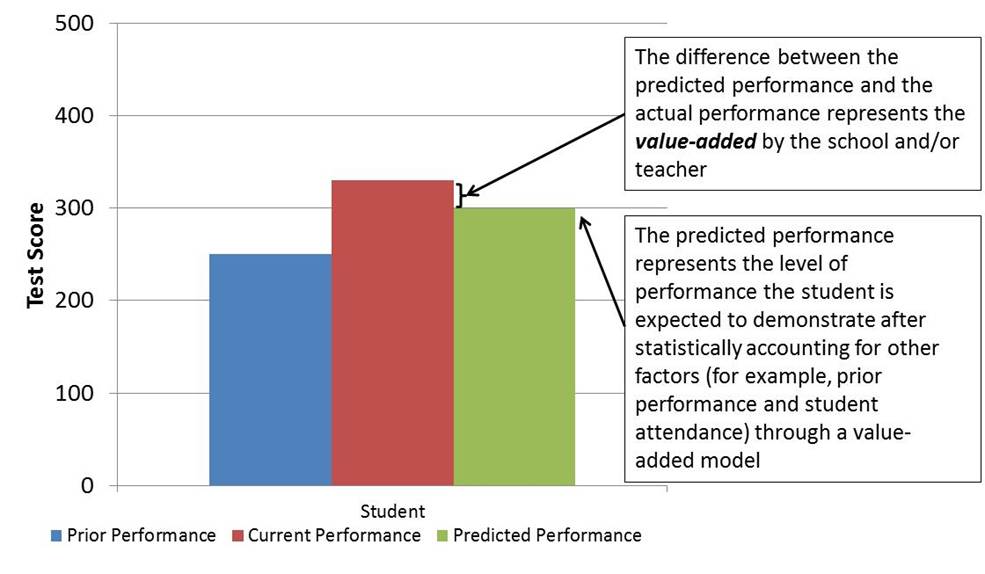

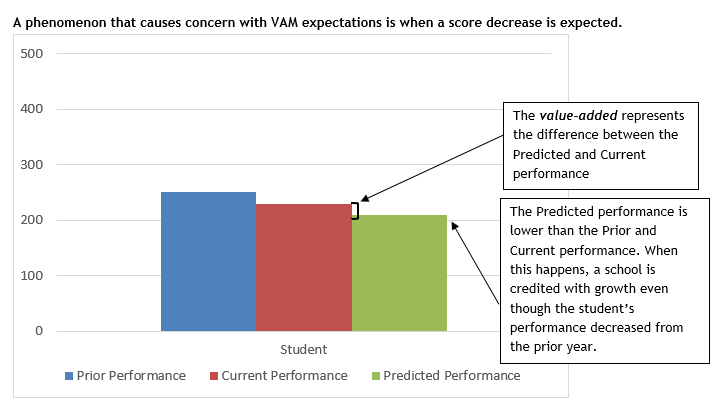

Value-added models are a normative way of measuring growth using a statistical model that estimates the portion of the individual student’s growth from year-to-year that is attributable to the school.

Value-added models annually estimate how much each student is predicted (expected) to learn from year-to-year. The predicted performance is based on past performance and potentially other factors or characteristics such as age, gender, coursework, disability status, class compositions and attendance. These values are calculated annually by grade and subject and change dependent on student outcomes within that year.

The student’s current performance is then compared to expected performance to determine how much “value” was added by the school.

If the student performs better than expected, then the amount that the student surpasses the expectation is considered “value-added” and growth is attributed to the school.

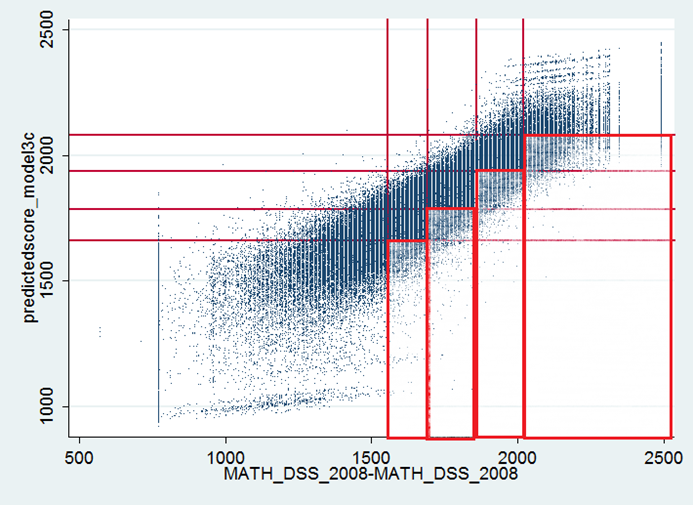

The next example is actual state-level data from math for grade 7, showing the prior score on the x-axis and the expectation on the y-axis.

The boxed and shaded area—the lower right quadrant—marks all the students who are “expected” to decrease an achievement level.

Even if negative growth is expected, as was the case with this actual state-level example, a school can still be deemed as achieving growth because the actual decrease in student scores was less than what was expected from the value-added model calculation.

Simplified VAM example with negative growth

Scenario 1 – Expectations Based Exclusively on the Model

| Student | Expected “Growth” per the VAM | Actual “Growth” | Margin by Which Actual Beats Expected |
| --- | --- | --- | --- |
| Student A | -10 | -5 | 5 |
| Student B | -50 | -40 | 10 |
| Student C | -40 | -10 | 30 |
| Student D | -80 | -70 | 10 |
| Student E | -55 | -40 | 15 |
| Student F | -5 | -2 | 3 |
| Student G | -20 | -10 | 10 |
| Student H | -15 | -9 | 6 |
| Student I | -11 | -8 | 3 |
| Student J | -29 | -23 | 6 |
| Total “Growth” for all Students | | | 98 |
| School Average Growth | | | 9.8 |

In the next scenario, the state raises every negative expectation to 0, so no student is “expected” to decline. Some models make this kind of adjustment to mitigate the impact of negative expected growth, and it results in a very different growth score.

Scenario 2 – Negative Expectations Raised to 0

| Student | Expected “Growth” per the VAM | Adjusted Growth Based on Decision | Actual “Growth” | Margin by Which Actual Beats Adjusted |
| --- | --- | --- | --- | --- |
| Student A | -10 | 0 | -5 | -5 |
| Student B | -50 | 0 | -40 | -40 |
| Student C | -40 | 0 | -10 | -10 |
| Student D | -80 | 0 | -70 | -70 |
| Student E | -55 | 0 | -40 | -40 |
| Student F | -5 | 0 | -2 | -2 |
| Student G | -20 | 0 | -10 | -10 |
| Student H | -15 | 0 | -9 | -9 |
| Student I | -11 | 0 | -8 | -8 |
| Student J | -29 | 0 | -23 | -23 |
| Total “Growth” for all Students | | | | -217 |
| School Average Growth | | | | -21.7 |
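
The arithmetic behind both scenarios can be reproduced with a short Python sketch. The expected and actual values are taken from the simplified example; the function name and the `floor` parameter are illustrative choices, not part of any actual value-added model.

```python
# Expected and actual "growth" for Students A-J, from the simplified example.
expected = {"A": -10, "B": -50, "C": -40, "D": -80, "E": -55,
            "F": -5, "G": -20, "H": -15, "I": -11, "J": -29}
actual = {"A": -5, "B": -40, "C": -10, "D": -70, "E": -40,
          "F": -2, "G": -10, "H": -9, "I": -8, "J": -23}


def school_growth(expected, actual, floor=None):
    """Return (total, average) of the margins by which actual beats expected.

    If `floor` is given, any expectation below it is raised to `floor`
    before the margin is computed (Scenario 2 uses floor=0).
    """
    margins = []
    for student, exp in expected.items():
        target = exp if floor is None else max(exp, floor)
        margins.append(actual[student] - target)
    total = sum(margins)
    return total, total / len(margins)


total_1, average_1 = school_growth(expected, actual)           # Scenario 1
total_2, average_2 = school_growth(expected, actual, floor=0)  # Scenario 2

print(total_1, average_1)
print(total_2, average_2)
```

Every student's score fell in both scenarios. The school's result changes from positive to negative only because the expectation it is compared against was changed.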

Student Growth Percentiles (SGP) are an example of normative growth using a statistical model that estimates “growth percentiles” among students who started at a similar level in order to evaluate individual student growth from year-to-year.

Growth expectations change annually because each student’s growth is judged relative to that of other students and not against predetermined expectations. The growth expectations/targets are determined from the performance of other students in the state after the state test results are received, so they shift each year with statewide performance.

The same percentage of students make growth every year, which makes longitudinal comparisons meaningless.

| Student | 4th Grade Score | 5th Grade Score | Growth | Growth Percentile |
| --- | --- | --- | --- | --- |
| Steve | 300 | 350 | 50 | 70th |
| Ann | 250 | 255 | 5 | 10th |
| John | 285 | 305 | 20 | 50th |
| Roger | 200 | 250 | 50 | 30th |
| Lyn | 325 | 340 | 15 | 90th |
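
A rough Python sketch shows how the same 50-point gain can land at different percentiles, as it does for Steve and Roger. Operational SGP calculations are statistical models and are more involved than this; the percentile-rank function and both peer groups below are hypothetical, with peer gains chosen only so the output matches the percentiles in the table.

```python
def growth_percentile(student_gain, peer_gains):
    """Percent of peers (similar starting score) who gained less."""
    below = sum(1 for gain in peer_gains if gain < student_gain)
    return 100 * below / len(peer_gains)


# Hypothetical gains for peers who started near Steve's score of 300.
peers_near_300 = [0, 5, 10, 15, 20, 30, 40, 55, 60, 70]

# Hypothetical gains for peers who started near Roger's score of 200.
peers_near_200 = [20, 35, 45, 55, 60, 65, 70, 75, 80, 90]

steve_percentile = growth_percentile(50, peers_near_300)
roger_percentile = growth_percentile(50, peers_near_200)

print(steve_percentile, roger_percentile)
```

Both students gained 50 points, but the result depends entirely on how the other students in each comparison group performed that year, which neither student could know in advance.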

Normative models like VAM and SGP consistently produce the same distribution of students making and not making growth. This held even during the COVID timeframe, a period in which student performance decreased in all states, yet growth measures showed schools meeting and exceeding growth expectations. In reality, students reported as meeting expectations were regressing in content mastery, albeit at a slower rate than their peers, and the growth results schools reported did not reveal it.

Undesirable characteristics of Normative Growth models: